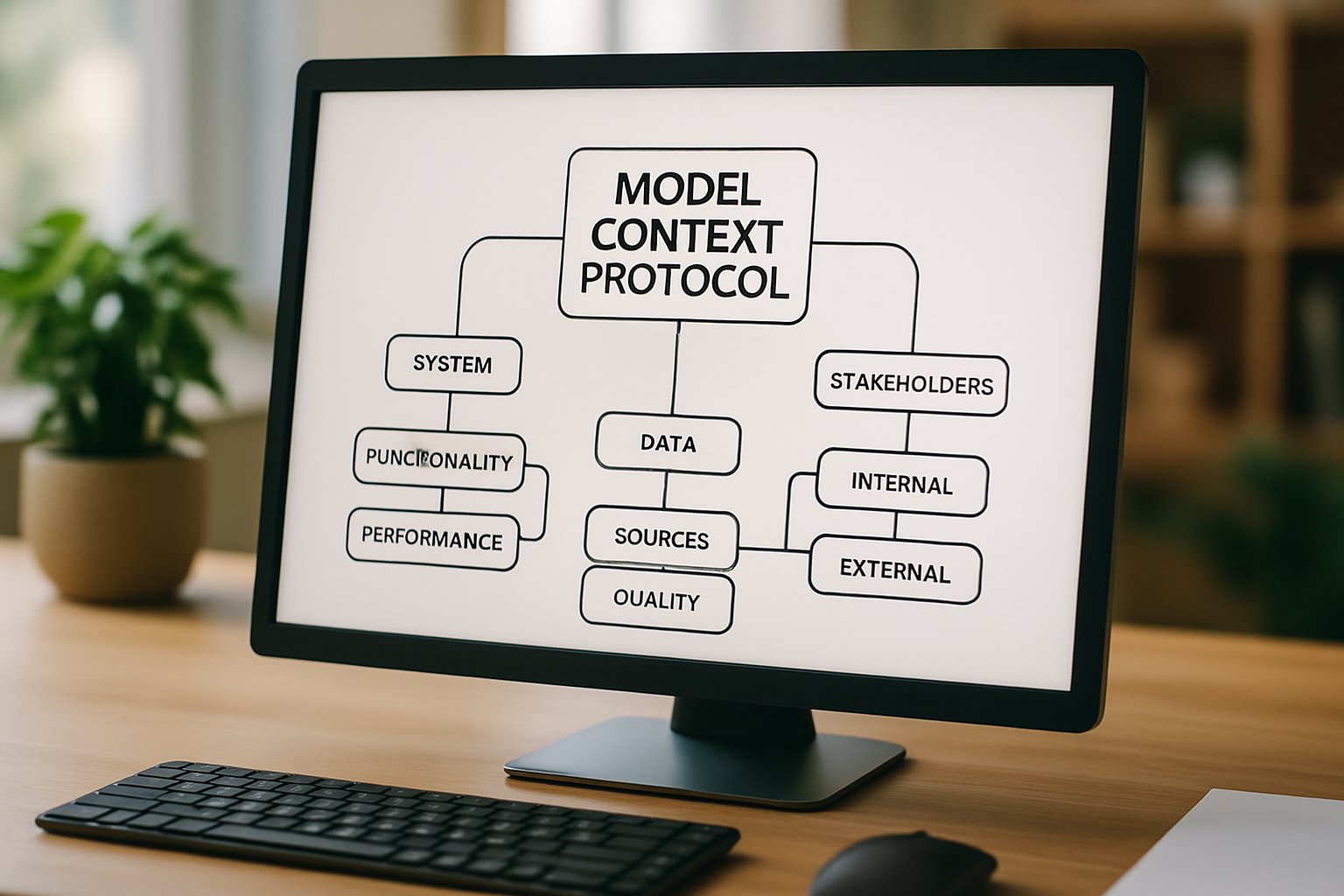

Model Context Protocol: Enterprise Blueprint For Domain Models

Generative AI adoption has accelerated, but without clear guardrails it risks descending into chaos. Enterprises now weigh accuracy, cost, and compliance in every deployment decision. The Model Context Protocol (MCP) provides that missing backbone: it orchestrates models, policies, and telemetry so business leaders can scale AI responsibly. This guide presents a strategic framework that combines MCP with Domain-Specific Language Models (DSLMs) and Adoptify’s AdaptOps.

Enterprise Market Shift Drivers

Market momentum favors Domain-Specific Language Models over general-purpose LLMs, and Gartner ranks them as a top priority for 2026. Leaders cite sharper accuracy and lower customization overhead. At the same time, executive boards now demand concrete ROI, not demos. BCG data shows that only a minority of companies capture value from AI, because governance and workflow integration lag behind.

MCP addresses fragmented model estates: it routes traffic, enforces policies, and exposes observability. When paired with AdaptOps playbooks, it gives enterprises auditable controls from day one. The Model Context Protocol therefore becomes the linchpin of sustainable AI adoption.

Key takeaway: DSLMs plus MCP shift AI from an experimental cost center to a regulated value engine. Next, we dissect the control plane.

Core Control Plane Essentials

An MCP implementation architecture must support multi-vendor routing, model lineage, and policy gating. Core modules include a model registry, a policy engine, a routing (orchestration) mesh, telemetry, an execution manager, and an API gateway. Adoptify templates accelerate this baseline with predefined governance schemas.

FinOps telemetry inside the Model Context Protocol highlights usage spikes, and tiered risk policies throttle expensive calls when ROI dips. Enterprises can direct sensitive traffic to on-prem DSLMs while non-sensitive flows hit cloud endpoints. This hybrid strategy keeps compliance intact and budgets under control.
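To make the hybrid routing idea concrete, here is a minimal Python sketch of a residency- and cost-aware router. It assumes two hypothetical endpoints (an on-prem DSLM and a cloud LLM) with made-up quality scores and prices; `Endpoint`, `HybridRouter`, and the 80% throttle threshold are illustrative assumptions, not part of the MCP specification or any Adoptify product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    on_prem: bool             # True for self-hosted DSLMs
    quality: int              # assumed offline evaluation score; higher is better
    usd_per_1k_tokens: float  # assumed blended price


@dataclass
class HybridRouter:
    endpoints: list[Endpoint]
    monthly_budget_usd: float
    throttle_at: float = 0.8  # assumed: fraction of budget after which expensive calls are throttled
    spent_usd: float = 0.0

    def route(self, tokens: int, sensitive: bool) -> Endpoint:
        # Residency rule: sensitive prompts may only go to on-prem endpoints.
        allowed = [e for e in self.endpoints if e.on_prem or not sensitive]
        if not allowed:
            raise LookupError("no endpoint satisfies the residency policy")
        if self.spent_usd >= self.throttle_at * self.monthly_budget_usd:
            # Throttled tier: fall back to the cheapest allowed endpoint.
            choice = min(allowed, key=lambda e: e.usd_per_1k_tokens)
        else:
            # Normal tier: use the highest-quality allowed endpoint.
            choice = max(allowed, key=lambda e: e.quality)
        # FinOps telemetry: add this call's estimated cost to the running total.
        self.spent_usd += tokens / 1000 * choice.usd_per_1k_tokens
        return choice


router = HybridRouter(
    endpoints=[
        Endpoint("onprem-claims-dslm", on_prem=True, quality=80, usd_per_1k_tokens=0.002),
        Endpoint("cloud-general-llm", on_prem=False, quality=90, usd_per_1k_tokens=0.01),
    ],
    monthly_budget_usd=100.0,
)
print(router.route(tokens=2000, sensitive=True).name)   # onprem-claims-dslm
print(router.route(tokens=2000, sensitive=False).name)  # cloud-general-llm
```

The residency check runs before any cost logic, so budget pressure can never push sensitive traffic to a cloud endpoint.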

Key MCP Core Modules

- Model registry with lineage tracking.

- Policy engine for RBAC and residency.

- Routing mesh for cost-aware selection.

- Telemetry dashboard with real-time SLOs.

- Execution manager for canary rollouts.
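Lineage tracking in a model registry can start as simple parent links plus dataset provenance. The sketch below assumes a hypothetical in-memory `ModelRegistry` and `ModelVersion` record with made-up model names; a production registry would likely persist these records rather than keep them in memory.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    model_id: str                 # hypothetical naming scheme, e.g. "claims-dslm:1.1"
    parent_id: Optional[str]      # base model this version was fine-tuned or distilled from
    dataset_ids: tuple[str, ...]  # provenance of the training corpora


class ModelRegistry:
    def __init__(self) -> None:
        self._versions: dict[str, ModelVersion] = {}

    def register(self, version: ModelVersion) -> None:
        # Refuse duplicates and orphaned parents so the lineage chain stays complete.
        if version.model_id in self._versions:
            raise ValueError(f"{version.model_id} is already registered")
        if version.parent_id is not None and version.parent_id not in self._versions:
            raise ValueError(f"unknown parent {version.parent_id}")
        self._versions[version.model_id] = version

    def lineage(self, model_id: str) -> list[str]:
        # Walk parent links from the given version back to the root base model.
        chain = []
        current: Optional[str] = model_id
        while current is not None:
            chain.append(current)
            current = self._versions[current].parent_id
        return chain

    def datasets(self, model_id: str) -> set[str]:
        # Union of every dataset used anywhere in the model's ancestry.
        return {d for m in self.lineage(model_id) for d in self._versions[m].dataset_ids}


registry = ModelRegistry()
registry.register(ModelVersion("base-llm:7b", None, ("public-web",)))
registry.register(ModelVersion("claims-dslm:1.0", "base-llm:7b", ("claims-2023",)))
registry.register(ModelVersion("claims-dslm:1.1", "claims-dslm:1.0", ("claims-2024",)))
print(registry.lineage("claims-dslm:1.1"))
# ['claims-dslm:1.1', 'claims-dslm:1.0', 'base-llm:7b']
```

Rejecting unknown parents at registration time is what makes the `datasets` answer trustworthy during an audit: every version's ancestry is guaranteed to be resolvable.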

Summary: Align your MCP implementation architecture to governance, FinOps, and latency goals. The next section covers building domain models.

Building Robust Domain Models

Successful DSLMs start with data discipline. Teams curate consented corpora, annotate edge cases, and log provenance inside the Model Context Protocol. Continual pretraining, progressive fine-tuning, and distillation then yield compact yet accurate models.

Engineers also register model cards, test suites, and evaluation reports. These artifacts feed deployment gates inside MCP, so risk reviews become fast and repeatable, and each DSLM ships only after it meets its latency budget and compliance thresholds.
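A deployment gate can be expressed as a function that checks registered artifacts against thresholds and returns the reasons a release is blocked. In this sketch, `ReleaseCandidate`, `check_gate`, the metric names, and every threshold value are hypothetical placeholders; real values would come from each domain's risk review.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    model_id: str
    has_model_card: bool
    tests_passed: int
    tests_total: int
    eval_scores: dict[str, float] = field(default_factory=dict)
    p95_latency_ms: float = 0.0


# Illustrative thresholds only.
GATE = {
    "min_test_pass_rate": 0.98,
    "min_eval": {"accuracy": 0.90, "groundedness": 0.85},
    "max_p95_latency_ms": 800.0,
}


def check_gate(rc: ReleaseCandidate, gate: dict = GATE) -> list[str]:
    """Return failure reasons; an empty list means the candidate may be promoted."""
    failures = []
    if not rc.has_model_card:
        failures.append("missing model card")
    if rc.tests_total == 0 or rc.tests_passed / rc.tests_total < gate["min_test_pass_rate"]:
        failures.append("test pass rate below threshold")
    for metric, floor in gate["min_eval"].items():
        score = rc.eval_scores.get(metric)
        # A missing evaluation report counts as a failure, not a pass.
        if score is None or score < floor:
            failures.append(f"{metric} below {floor}")
    if rc.p95_latency_ms > gate["max_p95_latency_ms"]:
        failures.append("p95 latency over budget")
    return failures


rc = ReleaseCandidate("claims-dslm:1.1", True, 495, 500,
                      {"accuracy": 0.93, "groundedness": 0.81}, p95_latency_ms=620)
print(check_gate(rc))  # ['groundedness below 0.85']
```

Returning every failure reason, rather than a single boolean, gives risk reviewers a repeatable record of why a release was held back.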

In short, treat the model lifecycle as code, governed by the protocol. We now turn to guardrails.

Governance And Runtime Guardrails

Regulated industries demand traceability. The Model Context Protocol enforces data loss prevention (DLP) simulations, output sanitization, and human-in-the-loop escalation. Adoptify’s governance kits supply templates for HIPAA, ISO, and SOC artifacts.

In addition, real-time guardrails block toxic or off-policy responses, and FinOps alerts surface runaway spend before invoices arrive. Together, these controls reduce audit headaches and strengthen stakeholder trust.
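Output sanitization and human-in-the-loop escalation can be layered as a post-processing step on each model response. This sketch assumes a `confidence` score supplied by some upstream evaluator and uses two illustrative regex patterns; the patterns, the blocked-phrase list, and the 0.7 threshold are assumptions, and this is not a complete DLP solution.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; production DLP needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
BLOCKED_TERMS = {"guaranteed returns"}  # hypothetical off-policy phrases


@dataclass
class GuardrailResult:
    text: str
    blocked: bool
    escalate: bool


def apply_guardrails(response: str, confidence: float,
                     min_confidence: float = 0.7) -> GuardrailResult:
    # Block off-policy responses outright and send them to a human reviewer.
    lowered = response.lower()
    if any(term in lowered for term in BLOCKED_TERMS):
        return GuardrailResult("", blocked=True, escalate=True)
    # Sanitize: replace each PII match with a typed placeholder.
    for label, pattern in PII_PATTERNS.items():
        response = pattern.sub(f"[{label}]", response)
    # Human-in-the-loop: low-confidence answers are flagged for review.
    return GuardrailResult(response, blocked=False, escalate=confidence < min_confidence)


result = apply_guardrails("Contact jane.doe@example.com, SSN 123-45-6789.", confidence=0.55)
print(result.text)      # Contact [EMAIL], SSN [US_SSN].
print(result.escalate)  # True
```

Sanitization runs even on responses that will be escalated, so reviewers never see raw PII either.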

Strategic Risk Checklist Summary

- Data residency mapped per dataset.

- Lineage and retraining records stored.

- Hallucination thresholds with auto-escalation.

- Workflow-level cost attribution.

- User escalation paths defined.
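Workflow-level cost attribution mostly comes down to tagging each model call with the workflow that made it and pricing its tokens. The sketch assumes a hypothetical `CallEvent` telemetry record, hypothetical model names, and made-up per-token prices.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallEvent:
    workflow: str        # business workflow tag attached at call time
    model_id: str
    input_tokens: int
    output_tokens: int


# Hypothetical USD prices per 1k tokens: (input, output).
PRICES = {
    "onprem-claims-dslm": (0.001, 0.002),
    "cloud-general-llm": (0.005, 0.015),
}


def cost_by_workflow(events: list[CallEvent]) -> dict[str, float]:
    """Sum estimated spend per workflow from raw telemetry events."""
    totals = defaultdict(float)
    for e in events:
        in_price, out_price = PRICES[e.model_id]
        totals[e.workflow] += (e.input_tokens * in_price + e.output_tokens * out_price) / 1000
    return dict(totals)


events = [
    CallEvent("claims-processing", "onprem-claims-dslm", 2000, 500),
    CallEvent("knowledge-search", "cloud-general-llm", 1000, 1000),
    CallEvent("claims-processing", "onprem-claims-dslm", 1000, 500),
]
print({k: round(v, 4) for k, v in cost_by_workflow(events).items()})
# {'claims-processing': 0.005, 'knowledge-search': 0.02}
```

Attributing cost per workflow rather than per model lets finance compare spend directly against the business process each pilot is meant to improve.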

Takeaway: Embed policy gates early; retrofitting later proves costly. Next, we explore scaling strategy.

Pilot Programs And Scale

Enterprises should launch 90-day pilots. Choose three measurable use cases, such as claims processing, knowledge search, and frontline copilots. Instrument baseline metrics inside the Model Context Protocol to compare DSLM output against the legacy steps it replaces.

Additionally, use canary deployments and A/B testing features within the MCP implementation architecture. When KPIs clear predefined gates, promote models to production with a single policy flip. Adoptify ROI calculators help secure board funding by translating telemetry into dollars.
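Canary exposure and the KPI-gated "policy flip" can be sketched with deterministic hash bucketing. `RolloutPolicy`, the model names, the 5% starting exposure, and the 5% uplift gate are assumptions chosen for illustration, and the KPI is assumed to be higher-is-better (for example, claims handled per hour).

```python
import hashlib
from dataclasses import dataclass


def in_canary(user_id: str, percent: int) -> bool:
    # Stable hash bucketing: a given user always lands in the same arm.
    bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
    return bucket < percent


@dataclass
class RolloutPolicy:
    incumbent: str
    candidate: str
    canary_percent: int = 5   # assumed starting exposure
    promoted: bool = False    # the single flag flipped on promotion

    def model_for(self, user_id: str) -> str:
        if self.promoted or in_canary(user_id, self.canary_percent):
            return self.candidate
        return self.incumbent

    def evaluate(self, baseline_kpi: float, canary_kpi: float,
                 min_uplift: float = 0.05) -> bool:
        # Promote only when the canary beats the baseline by at least min_uplift.
        if canary_kpi >= baseline_kpi * (1 + min_uplift):
            self.promoted = True
        return self.promoted


policy = RolloutPolicy(incumbent="claims-dslm:1.0", candidate="claims-dslm:1.1")
share = sum(policy.model_for(f"user-{i}") == "claims-dslm:1.1" for i in range(10_000)) / 10_000
print(f"canary share ~ {share:.1%}")
print(policy.evaluate(baseline_kpi=12.0, canary_kpi=13.1))  # True
print(policy.model_for("user-42"))                          # claims-dslm:1.1
```

Hashing the user ID, instead of sampling randomly per request, keeps each user's experience consistent during the pilot and makes A/B comparisons cleaner.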

Lesson: Measured pilots create momentum and confidence. Finally, we focus on people.

People Focused Adoption Levers

Technology succeeds only when teams engage. Therefore, pair launch plans with role-based microlearning delivered through Adoptify’s in-app guidance. Interactive nudges show users how Domain-Specific Language Models enhance tasks without disrupting flow.

Meanwhile, analytics spotlight friction points, and L&D leads adjust content quickly. This loop accelerates AI adoption and cements culture change.

Wrap-up: Empower people, and workflows follow. We close with concrete change management steps.

Actionable Change Management Steps

1. Form an AI Adoption Office.

2. Nominate model stewards per domain.

3. Schedule monthly telemetry reviews.

4. Refresh microlearning every quarter.

Frequently Asked Questions

- What is the Model Context Protocol (MCP) and how does it enhance AI governance?

The Model Context Protocol (MCP) centralizes AI model orchestration, policy enforcement, and telemetry tracking for scalable, secure deployments. It streamlines governance and integrates with Adoptify AI’s automated support for responsible digital adoption.

- How do Domain-Specific Language Models (DSLMs) improve AI deployment for enterprises?

DSLMs deliver higher accuracy and efficiency by reducing customization overhead. Their integration with MCP ensures compliant, cost-effective AI workflows and enhanced operational governance, aligning with strategic digital adoption trends.

- How does Adoptify AI facilitate digital adoption?

Adoptify AI leverages in-app guidance, role-based microlearning, and real-time user analytics to streamline AI adoption. Automated support and data-driven insights help minimize workflow disruptions, accelerating organizational transformation.

- What role do pilot programs play in achieving successful AI adoption?

Pilot programs enable enterprises to test AI integrations, measure KPIs, and refine workflows. By using Adoptify AI’s MCP-driven framework and canary deployments, organizations can scale innovations efficiently while managing risks and costs.
