AI Solution Development: Seven Steps to Optimize Processes

Enterprises chase automation gains, yet many never move beyond experiments. Leaders need clearer roadmaps and measured wins. Effective AI solution development unlocks those wins by rebuilding processes around data, people, and governance. However, missteps in pilots, training, or compliance can erase value fast.

McKinsey, BCG, and Gartner warn that scale fails when people and process receive little attention. Meanwhile, Adoptify clients record up to 26 minutes saved per user during early pilots, and those gains expand once governance and learning frameworks mature.

This article shows how to capture similar outcomes across HR, SaaS, and transformation teams. The sections below map a practical seven-step journey from experiment to enterprise adoption. Each step finishes with a key takeaway so you can act immediately, and examples cite finance and claims use cases to keep the guidance concrete. Numbers come from public research and Adoptify telemetry. Let’s begin by clarifying why many programs still stall after promising demos.

AI Solution Development Imperatives

Digitization created data lakes, yet workflows remain clogged with manual reviews. Therefore, executives demand optimization that cuts cycle time, error, and cost. GenAI and RPA finally bridge unstructured inputs and deterministic clicks.

However, technology alone delivers little. McKinsey shows 70 percent of value depends on redesigned processes and enabled workers. Consequently, any program must treat operating model transformation as first-class work.

- Clear business metrics linked to finance KPIs.

- Governance baked into every milestone.

- Role-specific training powered by microlearning.

Key takeaway: Value flows from process redesign, not magical models. Pair metrics, governance, and learning for success.

Next, we examine why companies still struggle to scale promising pilots.

Why Scaling Still Stalls

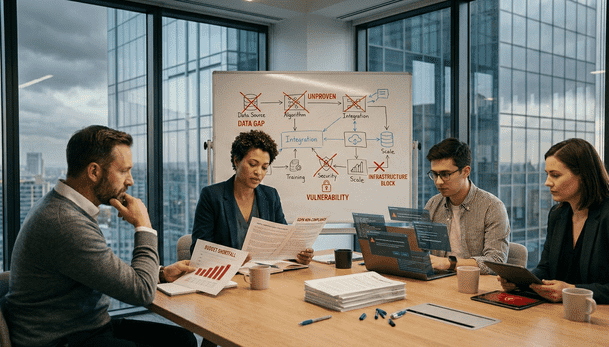

Surveys from BCG reveal 74 percent of firms cannot scale pilots into production. Leadership often celebrates demos without funding change management. Moreover, fragmented ownership breeds shadow AI and compliance headaches.

AI solution development stalls when teams ignore integration friction. Legacy systems lack APIs, and data quality issues inflate exception rates. Consequently, workflow errors spike and confidence drops.

Gartner adds that 77 percent of engineering leaders cite integration as a top challenge. Taken together, these numbers indicate that scale depends on cross-functional coordination, not just model accuracy.

Key takeaway: Scaling dies without ownership, integration plans, and funding.

With obstacles clear, we can unpack how AdaptOps removes them systematically.

AdaptOps Lifecycle In Action

Adoptify’s AdaptOps framework sequences Discover, Pilot, Scale, Embed, and Govern. Each stage carries gates, telemetry, and financial evidence. Therefore, sponsors gain transparency before releasing more budget.
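One way to picture the gated sequence is as a small state machine. The sketch below is illustrative only: the five stage names come from the text, while the `advance` helper and the gate names in `evidence` are hypothetical and not part of any Adoptify API.

```python
from enum import Enum


class Stage(Enum):
    DISCOVER = 1
    PILOT = 2
    SCALE = 3
    EMBED = 4
    GOVERN = 5


def advance(current: Stage, evidence: dict[str, bool]) -> Stage:
    """Move to the next stage only if every gate check passed.

    `evidence` maps a hypothetical gate name (for example
    "telemetry_reviewed") to whether the sponsor accepted it.
    """
    if current is Stage.GOVERN:
        return current  # last stage in the sequence; nothing further to unlock
    if evidence and all(evidence.values()):
        return Stage(current.value + 1)
    return current  # hold until the evidence is complete


# Assumed gate names, for illustration only.
next_stage = advance(Stage.PILOT, {"telemetry_reviewed": True, "roi_evidence": True})
```

An empty evidence dictionary deliberately keeps the program at its current stage, so budget is never released by default.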

During Discover, consultants map pain points and compliance demands. Pilot then launches a 90-day Copilot extension for 50–200 users. AdaptOps logs minutes saved, error reduction, and token usage daily.

AI solution development moves from theory to measurable outcomes within weeks. Moreover, governance starter kits inject DLP rules, bias tests, and rollback plans. Consequently, risk officers approve expansions faster.

As Scale kicks in, custom dashboards map productivity gains to NPV scenarios. Teams can adjust models, connectors, and prompts before broad release. That agility keeps momentum high.

Continuous telemetry also guides further AI solution development across new processes. Early wins often inspire requests for custom AI solutions in other departments.

Key takeaway: AdaptOps converts exploratory work into funded programs through gated evidence and built-in governance.

Next, we look at designing the right pilots to feed this lifecycle.

Designing High Value Pilots

Success starts with a narrow, high-volume process like invoice intake or claims triage. Baseline metrics include cycle time, error rate, and cost per transaction.

Teams should capture those baselines in finance-grade spreadsheets before coding begins. AI solution development then targets explicit percentage improvements, not vague hopes.
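As a minimal sketch of what "explicit percentage improvements" could look like in practice, the snippet below compares a baseline against pilot results for the three metrics named above. The class, function names, and all numbers are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProcessMetrics:
    cycle_time_minutes: float
    error_rate: float            # fraction of transactions with errors
    cost_per_transaction: float


def percent_improvement(baseline: float, observed: float) -> float:
    """Percentage reduction relative to the baseline (positive means better)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (baseline - observed) / baseline * 100


def compare(baseline: ProcessMetrics, pilot: ProcessMetrics) -> dict[str, float]:
    """Improvement per metric, rounded to one decimal place."""
    names = ("cycle_time_minutes", "error_rate", "cost_per_transaction")
    return {
        name: round(percent_improvement(getattr(baseline, name), getattr(pilot, name)), 1)
        for name in names
    }


# Made-up figures, not drawn from any real pilot.
baseline = ProcessMetrics(cycle_time_minutes=40.0, error_rate=0.08, cost_per_transaction=5.00)
pilot = ProcessMetrics(cycle_time_minutes=30.0, error_rate=0.06, cost_per_transaction=4.00)
improvements = compare(baseline, pilot)
```

All three metrics are "lower is better", which is why a single reduction formula covers them; a metric where higher is better would need the sign flipped.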

A proven pilot checklist includes:

- Six-week timeline with weekly executive demos.

- Clear go/no-go metric thresholds.

- Named data steward and product owner.

- User cohort of 50–200 for statistical power.

- Training plan covering microlearning and office hours.
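The go/no-go item in the checklist above can be made mechanical. The following is a hedged sketch, assuming each threshold is expressed as a minimum percentage improvement; the metric names and values are hypothetical.

```python
def go_no_go(results: dict[str, float],
             thresholds: dict[str, float]) -> tuple[bool, list[str]]:
    """Return (decision, misses).

    A metric counts as a miss when its result is below the agreed minimum,
    or when it was never measured at all.
    """
    misses = [
        metric
        for metric, minimum in thresholds.items()
        if results.get(metric, float("-inf")) < minimum
    ]
    return (not misses, misses)


# Hypothetical thresholds agreed with the sponsor before the pilot starts.
thresholds = {"cycle_time": 20.0, "error_rate": 15.0}
results = {"cycle_time": 25.0, "error_rate": 10.0}
decision, misses = go_no_go(results, thresholds)
```

Returning the list of missed metrics alongside the decision gives the executive demo something concrete to discuss rather than a bare "no".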

Furthermore, Adoptify pilots embed ROI dashboards that finance teams trust. NPV modeling updates each week, ensuring early course corrections.

Key takeaway: Short, metric-driven pilots build credibility and surface integration issues early.

With pilots defined, we shift to the human factors that unlock scaling.

Human Learning Drives ROI

Even the best model fails when users lack confidence. Gartner surveys show workers save 90 minutes daily, yet 88 percent of firms see little value.

Adoptify addresses this gap with in-app guidance, role quizzes, and adoption champions. Microlearning surfaces just-in-time tips inside Copilot, trimming cognitive load.

Custom AI solutions need measurable adoption, not vanity clicks. Therefore, AdaptOps tracks cohort usage, minutes saved, and feature depth per persona.

This data feeds weekly leadership reviews and unlocks further AI solution development funding.
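To make the per-persona tracking concrete, here is a rough sketch of how usage events might be rolled up. The event shape (keys `persona`, `user_id`, `minutes_saved`, `feature`) is an assumption for illustration, not AdaptOps' actual telemetry schema.

```python
from collections import defaultdict


def summarize_by_persona(events: list[dict]) -> dict[str, dict[str, float]]:
    """Aggregate usage events into per-persona adoption figures."""
    users = defaultdict(set)
    minutes = defaultdict(float)
    features = defaultdict(set)
    for event in events:
        persona = event["persona"]
        users[persona].add(event["user_id"])
        minutes[persona] += event["minutes_saved"]
        features[persona].add(event["feature"])
    return {
        persona: {
            "active_users": len(users[persona]),
            "minutes_saved_per_user": minutes[persona] / len(users[persona]),
            "distinct_features_used": len(features[persona]),
        }
        for persona in users
    }


# Made-up events for illustration.
events = [
    {"persona": "analyst", "user_id": "u1", "minutes_saved": 10, "feature": "summarize"},
    {"persona": "analyst", "user_id": "u2", "minutes_saved": 20, "feature": "draft"},
    {"persona": "analyst", "user_id": "u1", "minutes_saved": 6, "feature": "summarize"},
]
summary = summarize_by_persona(events)
```

Counting distinct features per persona is one simple proxy for "feature depth"; a real program might weight features differently.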

Key takeaway: Targeted training multiplies ROI and produces proof for skeptics.

Governance now becomes the next priority before enterprise rollout.

Governance At Every Gate

Regulated industries cannot risk hallucinations or data leakage. AdaptOps includes fairness tests, DLP connectors, and human-in-the-loop reviews by default.

Moreover, telemetry logs prompts, outputs, and drift metrics for each release. Compliance officers view evidence in a single dashboard, cutting audit prep time.
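The text does not say which drift metric is logged. As an assumption, the sketch below uses the population stability index (PSI), one common choice, to compare how outputs were distributed across categories at release time versus now. The bin definitions and example proportions are hypothetical.

```python
import math


def population_stability_index(expected: list[float], actual: list[float]) -> float:
    """PSI between two binned distributions given as proportions per bin."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    eps = 1e-6  # floor that avoids log(0) when a bin is empty
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical share of outputs per response category: at release vs. this week.
at_release = [0.50, 0.30, 0.20]
this_week = [0.40, 0.30, 0.30]
drift = population_stability_index(at_release, this_week)
```

PSI is zero when the two distributions match and grows as they diverge; the alert threshold is a policy decision for the compliance team to set.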

Such control ensures AI solution development aligns with policy rather than skirting it.

Consequently, leadership approves broader usage with less friction and greater trust.

Key takeaway: Robust governance turns potential blockers into champions by exposing real-time compliance evidence.

Finally, leaders must tie all progress to hard numbers.

Financial Modeling For Confidence

Forrester TEI studies show Copilot programs return 106–300 percent ROI when modeled properly. However, those gains disappear without disciplined cost tracking.

Adoptify cost estimators outline license, training, and maintenance modules across pilot, scale, and steady state. Teams run sensitivity ranges to reveal upside and downside scenarios.

Custom AI solutions gain funding when finance trusts the numbers. Therefore, weekly dashboards feed automatic NPV refreshes.
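For readers who want to see the arithmetic behind an NPV refresh with sensitivity ranges, here is a minimal sketch. It uses the standard discounted cash flow formula; the scenario names, cost figures, and discount rate are invented for illustration and do not come from Adoptify's estimators.

```python
def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value; cash_flows[0] occurs now, later entries one period apart."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def scenario_npv(upfront_cost: float, annual_benefit: float,
                 annual_cost: float, years: int, rate: float) -> float:
    """NPV of a program with one upfront cost and constant yearly net benefit."""
    flows = [-upfront_cost] + [annual_benefit - annual_cost] * years
    return npv(rate, flows)


# Hypothetical sensitivity range on the annual benefit estimate.
scenarios = {"downside": 80_000.0, "base": 120_000.0, "upside": 160_000.0}
results = {
    name: scenario_npv(upfront_cost=100_000.0, annual_benefit=benefit,
                       annual_cost=30_000.0, years=3, rate=0.08)
    for name, benefit in scenarios.items()
}
```

Varying one input at a time, as here, shows which assumption the business case is most sensitive to before finance commits to the next phase.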

This rigor accelerates next-phase AI solution development across adjacent workflows.

Key takeaway: Transparent models de-risk investment and pave the way for continuous improvement.

We end with guidance on sustaining momentum.

Conclusion

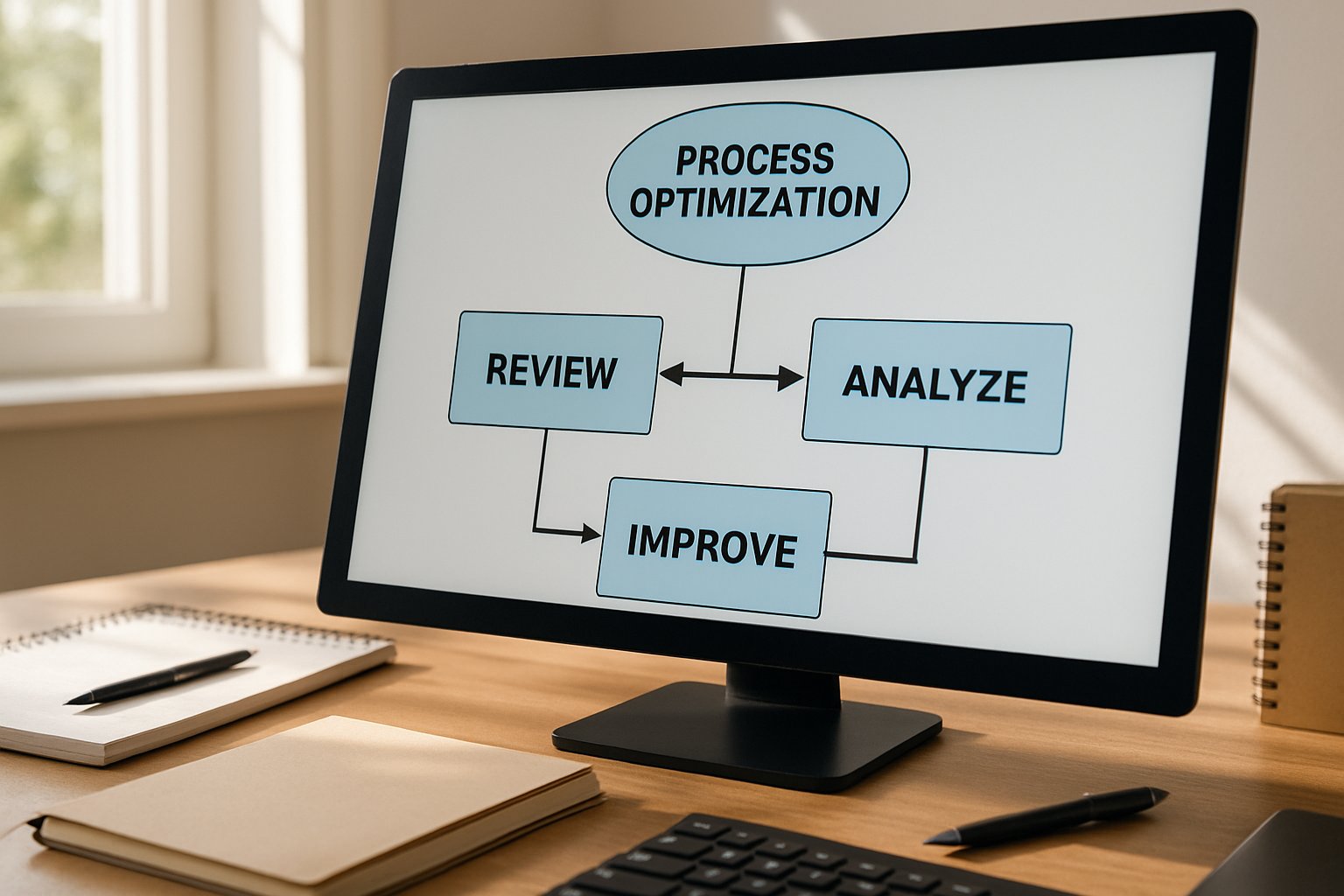

Process optimization demands discipline across metrics, people, and governance. The seven steps above show how AI solution development delivers measurable ROI when managed through AdaptOps. Clear pilots, continuous learning, and finance-grade dashboards turn experiments into enterprise value.

Why Adoptify AI? The platform pairs AI-powered digital adoption with interactive in-app guidance. Intelligent analytics reveal user friction while automated workflows remove clicks and delays. The result is faster onboarding, higher productivity, and enterprise-grade security that scale across the organization. Visit Adoptify today to streamline processes and unlock ROI.

Frequently Asked Questions

- How does process redesign drive successful AI solution development?

Process redesign is key because it aligns business metrics, governance, and continuous learning. AdaptOps from Adoptify AI uses digital adoption and in-app guidance to transform pilots into scalable, ROI-driven solutions.

- How does Adoptify AI help overcome integration challenges during scaling?

Adoptify AI combats integration challenges by offering automated support, user analytics, and clear in-app guidance. Its AdaptOps framework ensures seamless connectivity, reducing errors and cycle time for efficient scaling.

- How do targeted training and in-app guidance boost user adoption?

Targeted training and in-app guidance empower users to reduce friction and enhance productivity. By employing role-based microlearning, AdaptOps from Adoptify AI builds user confidence and drives faster digital adoption.

- What role does robust governance play in advancing AI projects?

Robust governance ensures compliance, minimizes risks, and validates performance through continuous telemetry. With built-in fairness tests and automated support, Adoptify AI secures enterprise AI adoption and streamlines complex workflows.
