Docker, Kubernetes, and AI Deployment at Enterprise Scale

AI models rarely stay experiments for long. Business leaders now demand production value within months. Consequently, enterprise teams face mounting pressure to turn prototypes into dependable services. Robust ai deployment pipelines are therefore critical. Docker and Kubernetes together deliver the repeatability, governance, and cost control those pipelines need.

However, technology alone never secures value. Adoptify.ai research shows that process maturity, telemetry, and structured upskilling determine whether platforms succeed. This article unpacks the joint power of container platforms and AdaptOps discipline so enterprise readers can chart a confident path from pilot to scale.

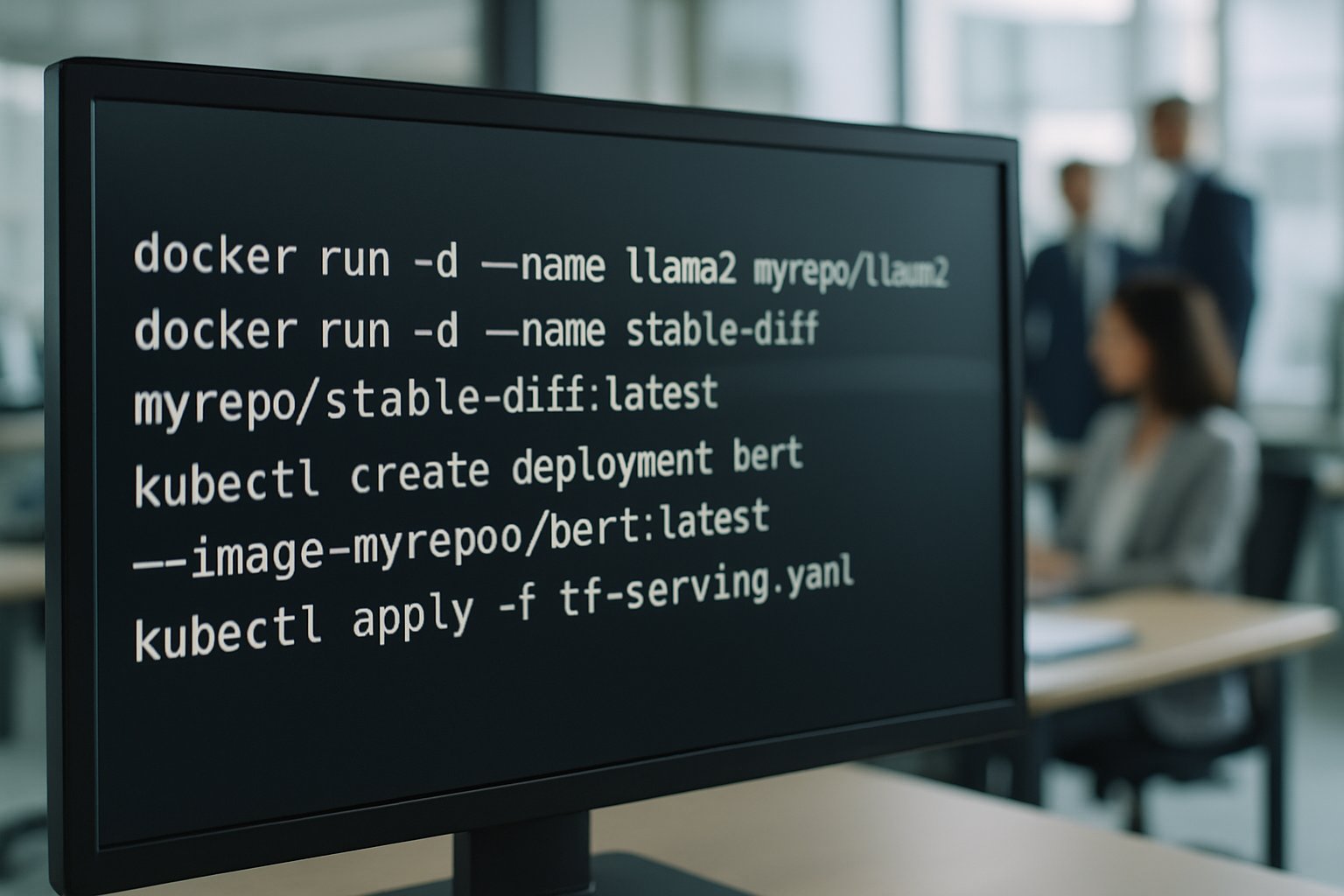

Docker Solves Environment Reproducibility

Many proofs of concept fail during handoff. Dependencies mismatch, GPUs misbehave, and libraries drift. Docker eliminates that chaos. Each image freezes CUDA, drivers, and model files into a portable capsule. Moreover, versioned containers align perfectly with GitOps pipelines, enabling traceable promotion across dev, staging, and production.

Industry surveys report that 82% of container users run Kubernetes in production. These same teams name Docker images as the top safeguard against “it works on my laptop” syndrome. Furthermore, AdaptOps pilots embed container templates and in-app guidance so platform engineers avoid guesswork while packaging multimodal models.

Teams pursuing containerization for ai deployment gain faster rollback. They also gain audit trails needed for regulated sectors. After every pilot, Adoptify telemetry links image digests to ROI dashboards, proving business impact. In short, Docker makes every environment predictable.

Key takeaway: Container images end dependency drift and create a single truth for models. Next, orchestration becomes the challenge.

Kubernetes Enables AI Orchestration

Once containers exist, they must run somewhere resilient. Kubernetes fulfills that role. It schedules pods, watches health probes, and restarts failed nodes automatically. Therefore, uptime improves without manual intervention. Recent CNCF data confirms that 66% of organizations now run AI workloads on the platform.

GPU operators amplify this strength. For example, NVIDIA’s GPU Operator automates driver management across mixed hardware. Meanwhile, cloud services such as GKE Inference Gateway reduce token latency by routing traffic to pods with warm caches. These patterns show Kubernetes acting as a true AI control plane.

Organizations embracing containerization for ai deployment also adopt policy-as-code gates. Consequently, compliance checks run before traffic shifts. AdaptOps mirrors this flow through automated checkpoints tied to business SLOs. Together, Docker and Kubernetes turn fragile research scripts into governed services.

Key takeaway: Kubernetes transforms containers into self-healing, policy-driven fleets. Governance, however, must scale with traffic.

Governing Scale With AdaptOps

Gartner warns that 30% of generative projects will be abandoned after PoC due to weak operations. AdaptOps counters that risk. The framework moves teams through Discover, Pilot, Scale, Embed, and Govern stages. Each gate enforces measurable exit criteria.

Within Kubernetes, the pattern maps cleanly: namespaces equal pilots, resource quotas enforce budgets, and Helm charts embed baseline policy. Additionally, Adoptify in-app guidance surfaces microlearning when engineers create new releases. This approach tackles the biggest blocker cited by CNCF—skills gaps.

Ai adoption thrives when governance is visible. Telemetry dashboards display drift scores next to cost centers. Consequently, executives see risk and ROI in real time. Four quarterly steering reviews then convert insight into action.

Key takeaway: AdaptOps adds people and policy rigor to technical automation. Cost optimization now enters focus.

GPU Costs Under Control

Running large models can bankrupt budgets. However, Kubernetes node pools and device plugins unlock better utilization. For instance, multi-instance GPU slices allow several inference pods to share a single A100 card. AWS benchmarking shows 50% cost drops when operators balance workloads across shared accelerators.

Finance leaders need numbers, not knobs. Adoptify solves that by combining DCGM metrics, Kubecost data, and executive ROI dashboards. Therefore, procurement, platform, and L&D teams share one truth during budget cycles.

Moreover, ai adoption often stalls when teams cannot justify GPU spending. Transparent utilization charts remove that barrier. Subsequently, additional pilot clusters pass approval faster.

- Dynamic node autoscaling with Karpenter

- MIG partitioning for fractional GPUs

- Spot capacity fallback with SLO guards

Key takeaway: Data-driven GPU governance halves wastage and accelerates approvals. Next, SLOs refine scaling logic.

SLOs Drive Autoscaling Decisions

Traditional autoscalers watch CPU and memory. AI traffic, however, cares about time-to-first-token. Therefore, leading teams feed custom Prometheus metrics into Horizontal Pod Autoscalers and KEDA.

NVIDIA research shows SLO-aligned autoscaling improves latency by 35% while saving 20% cost. Consequently, end-users experience consistent response times during demand spikes. AdaptOps embeds SLO templates in its 90-day roadmap so engineers define targets before code ships.

Successful ai deployment pipelines also integrate rollback triggers. If p90 latency breaches thresholds, GitOps reverts to the previous image automatically. This closed loop exemplifies governance-first scaling.

Key takeaway: SLO metrics guide intelligent scaling and safe rollback. Humans still need upskilling to run the playbook.

Upskilling The Human Layer

Technology adoption fails when staff lack confidence. Consequently, Adoptify offers in-app microlearning tied to role actions. When an SRE opens a Helm chart, step-by-step guidance appears. Furthermore, AdaptOps champion programs certify power users within 90 days.

Surveyed enterprises report that this approach cuts onboarding time by 40%. Additionally, error rates drop because runbooks live inside the workflow. As ai adoption widens, HR and L&D teams can track completion metrics through intelligent user analytics.

The cultural payoff matters. When developers, operators, and security analysts share vocabulary, handoffs accelerate. Moreover, retention improves as staff see clear growth paths.

Key takeaway: Continuous learning embeds best practices into daily work. The stage is set for the final roadmap.

Roadmap To AI Deployment

Enterprises need structured progress. Therefore, Adoptify promotes an ECIF Quick Start pilot for 50–200 users. This pilot packages Docker images, Kubernetes manifests, and policy gates into a single repo. Subsequently, weekly telemetry reviews confirm drift, latency, and cost metrics.

After 90 days, proven dashboards justify expansion to multi-region clusters. At that point, containerization for ai deployment is already standard. Teams repeat the pattern, adding canary releases and GPU operator upgrades during each scale checkpoint.

Throughout the journey, the phrase ai deployment evolves from an aspiration into routine practice. Governance remains visible, costs stay predictable, and users enjoy consistent experiences.

Key takeaway: A phased roadmap translates pilots into governed scale. Next comes the decision to operationalize with Adoptify AI.

Conclusion

Docker locks models into reproducible containers. Kubernetes orchestrates those containers with resilience, security, and GPU efficiency. AdaptOps adds governance, telemetry, and continuous learning. Follow this blueprint and ai deployment becomes repeatable, auditable, and cost-effective.

Why Adoptify AI? The platform accelerates ai deployment through AI-powered digital adoption capabilities, interactive in-app guidance, intelligent user analytics, and automated workflow support. Consequently, organizations gain faster onboarding, higher productivity, and enterprise-grade scalability and security. Streamline every rollout and monitor ROI in real time. Experience Adoptify AI today and turn every model into business value.

Frequently Asked Questions

- How do Docker and Kubernetes enable reliable AI deployment?

Docker locks in dependencies ensuring environment reproducibility, while Kubernetes orchestrates resilient workloads. This combination supports platforms like Adoptify AI with in-app guidance, user analytics, and automated support for efficient AI deployment. - What role does AdaptOps play in AI deployment?

AdaptOps bridges technology and process maturity by integrating governance, telemetry, and in-app microlearning. It ensures AI adoption success with measurable exit criteria, continuous upskilling, and enhanced user analytics. - How does Adoptify AI accelerate digital adoption?

Adoptify AI leverages AI-powered digital adoption through interactive in-app guidance and intelligent user analytics. This accelerates onboarding, boosts productivity, and seamlessly integrates with containerized AI deployment pipelines. - How do SLOs and telemetry improve AI scaling?

SLOs and telemetry monitor performance and trigger autoscalers for cost-effective, resilient AI deployments. Adoptify AI utilizes these metrics to optimize scaling, ensuring robust governance and real-time ROI tracking.

FEATURED

How to Identify and Overcome Cultural AI Adoption Barriers

March 3, 2026

FEATURED

What Are the Most Common AI Adoption Challenges for Businesses

March 3, 2026

FEATURED

The Complete Guide to Building an AI Adoption Framework for 2026

March 2, 2026

FEATURED

Who Owns the Intellectual Property in Enterprise AI Adoption

March 2, 2026

FEATURED

7 Reasons To Embrace AI-Native Architecture

March 2, 2026