Microsoft reports that nearly 80% of the Fortune 500 now deploy AI-powered agents across operations, development, and customer workflows. While adoption accelerates, cybersecurity leaders warn that an expanding Agentic Visibility Gap threatens governance, compliance, and enterprise security.

AI agents now draft reports, manage infrastructure, automate coding, and support internal decision-making. However, many organizations struggle to track how these systems access data, interact with applications, and retain memory. As a result, the Agentic Visibility Gap, the disconnect between AI deployment and oversight, continues to widen.

This shift marks a turning point in enterprise technology strategy. AI agents no longer serve as experimental tools. Instead, they function as operational actors with real permissions, access pathways, and decision-making capabilities.

Rapid Adoption Across the Fortune 500

Microsoft’s data suggests that AI agents have moved from pilot programs to production environments at remarkable speed. Enterprises integrate agents into customer service, financial analysis, cybersecurity monitoring, and software development pipelines.

Because these systems operate autonomously, companies gain efficiency and scalability. However, rapid deployment often outpaces governance controls. Consequently, the Agentic Visibility Gap grows when organizations fail to map agent permissions and interactions.

Many Fortune 500 companies empower departments to implement AI solutions independently. While this decentralized model accelerates innovation, it also increases exposure to Shadow AI: unauthorized or unsanctioned AI systems running outside formal IT oversight.

Shadow AI Intensifies the Agentic Visibility Gap

Shadow AI poses one of the most pressing enterprise risks today. Employees often deploy AI agents through cloud platforms or SaaS integrations without notifying security teams. As a result, organizations lose clear sight of which agents access sensitive systems.
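One practical starting point is to compare the agent identities that appear in access logs against a registry of sanctioned agents. The Python sketch below assumes a simplified, hypothetical log record (`AccessEvent` with an `agent_id` and a target `system`) and a hypothetical registry of approved IDs; real platforms expose agent identities in different ways, so treat it as an illustration rather than a ready-made detector.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class AccessEvent:
    """Hypothetical, simplified access-log record."""
    agent_id: str
    system: str


def find_shadow_agents(events: Iterable[AccessEvent], sanctioned: Set[str]) -> Dict[str, List[str]]:
    """Map each unregistered agent to the sorted list of systems it touched."""
    shadow: Dict[str, Set[str]] = {}
    for event in events:
        if event.agent_id not in sanctioned:
            shadow.setdefault(event.agent_id, set()).add(event.system)
    return {agent: sorted(systems) for agent, systems in shadow.items()}


if __name__ == "__main__":
    registry = {"support-bot", "code-review-agent"}  # hypothetical sanctioned agents
    log = [
        AccessEvent("support-bot", "crm"),
        AccessEvent("mkt-summarizer", "customer-db"),
        AccessEvent("mkt-summarizer", "cloud-storage"),
    ]
    print(find_shadow_agents(log, registry))
    # {'mkt-summarizer': ['cloud-storage', 'customer-db']}
```

Reporting which systems each unknown agent touched, rather than just its ID, gives security teams an immediate sense of how sensitive the blind spot is.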

The Agentic Visibility Gap expands when agents operate in silos. For example, a marketing team might deploy an AI assistant connected to customer databases. Meanwhile, a development team could integrate a coding agent with repository write access. Without centralized tracking, security teams cannot assess cumulative risk.

Furthermore, AI agents frequently integrate across platforms. They may access CRM systems, analytics dashboards, and cloud storage simultaneously. Therefore, even small visibility lapses can create large exposure surfaces.
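Assessing cumulative risk requires a single view of which agents can reach which systems. The following sketch groups hypothetical permission grants by system and flags agents that can write to systems labeled sensitive. The agent names, the `(agent, system, level)` grant format, and the sensitivity labels are illustrative assumptions, not any vendor's schema.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Grant = Tuple[str, str, str]  # (agent, system, access level): hypothetical format


def exposure_by_system(grants: List[Grant]) -> Dict[str, Dict[str, str]]:
    """Group grants per system so reviewers see every agent that can reach it."""
    view: Dict[str, Dict[str, str]] = defaultdict(dict)
    for agent, system, level in grants:
        view[system][agent] = level
    return dict(view)


def sensitive_write_paths(grants: List[Grant], sensitive: Set[str]) -> List[Tuple[str, str]]:
    """List (system, agent) pairs where an agent can modify a sensitive system."""
    return sorted((system, agent) for agent, system, level in grants
                  if system in sensitive and level == "write")


if __name__ == "__main__":
    grants = [
        ("marketing-assistant", "customer-db", "read"),
        ("marketing-assistant", "analytics", "read"),
        ("coding-agent", "git-repo", "write"),
        ("finance-agent", "customer-db", "write"),
    ]
    sensitive = {"customer-db", "git-repo"}  # assumption: supplied by a data catalog
    print(exposure_by_system(grants)["customer-db"])
    print(sensitive_write_paths(grants, sensitive))
```

Pivoting by system rather than by team is the point here: two siloed deployments that each look harmless can still combine into broad access to the same sensitive store.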

Memory Poisoning: A New Enterprise Threat

Experts warn that Shadow AI and memory poisoning risks widen the Agentic Visibility Gap.

Beyond access control, AI agents introduce memory-related vulnerabilities. Many modern systems store contextual data to improve future responses. However, attackers can manipulate these memory stores.

Memory poisoning occurs when malicious inputs alter an agent’s retained knowledge. Over time, corrupted memory can influence outputs, recommendations, or automated actions. Consequently, closing the Agentic Visibility Gap requires not only tracking access but also monitoring the integrity of agent memory.

Enterprises that overlook this risk may face subtle but significant operational disruptions. For instance, poisoned memory in a procurement agent could distort vendor evaluations. In cybersecurity contexts, manipulated memory might weaken threat detection processes.
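One partial safeguard is to record where every memory entry came from and sign it at write time, so that entries from untrusted sources or entries edited outside the normal write path are set aside for review. The sketch below uses Python's standard `hmac` module; the signing key, the `TRUSTED_SOURCES` labels, and the in-memory list store are hypothetical. It does not stop poisoning that arrives through a trusted channel, because such input is signed and accepted like any other entry.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List, Tuple

# Assumption: in practice the key would come from a secrets manager, not source code.
SIGNING_KEY = b"replace-with-managed-secret"
TRUSTED_SOURCES = {"verified-kb", "operator"}  # hypothetical provenance labels


@dataclass
class MemoryEntry:
    text: str
    source: str
    tag: str = ""


def _digest(text: str, source: str) -> str:
    """HMAC-SHA256 over the source label and text, separated by a NUL byte."""
    msg = f"{source}\x00{text}".encode("utf-8")
    return hmac.new(SIGNING_KEY, msg, hashlib.sha256).hexdigest()


def write_memory(store: List[MemoryEntry], text: str, source: str) -> None:
    """Record provenance and sign the entry when it is written."""
    store.append(MemoryEntry(text, source, _digest(text, source)))


def recall(store: List[MemoryEntry]) -> Tuple[List[str], List[MemoryEntry]]:
    """Return usable memories plus entries flagged as altered or untrusted."""
    usable, flagged = [], []
    for entry in store:
        intact = hmac.compare_digest(entry.tag, _digest(entry.text, entry.source))
        if intact and entry.source in TRUSTED_SOURCES:
            usable.append(entry.text)
        else:
            flagged.append(entry)
    return usable, flagged
```

In a procurement scenario, an instruction scraped from an untrusted page or a vendor score edited directly in storage would land in `flagged` instead of shaping the agent's next evaluation.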

As adoption expands across the Fortune 500, memory governance becomes essential to maintaining trust in agent-driven systems.

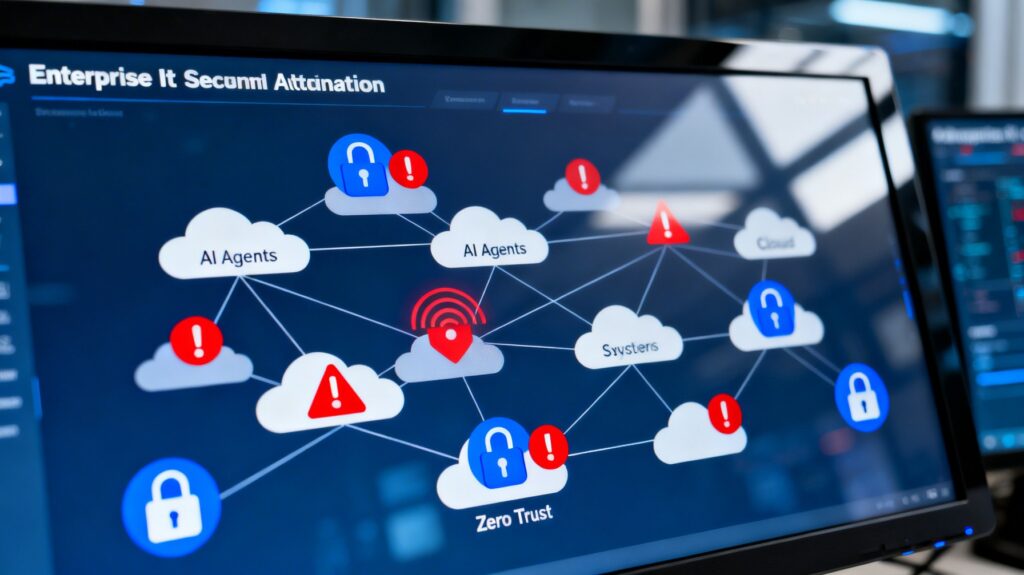

Zero Trust and Agent Oversight

Many enterprises adopt Zero Trust architectures to strengthen security. Under Zero Trust, organizations verify every access request and limit privileges by default. However, AI agents challenge traditional models.

Agents often require broad access to function effectively. They pull data from multiple systems, execute scripts, and modify workflows. If organizations fail to adapt Zero Trust principles for AI, the Agentic Visibility Gap widens.

Security leaders now advocate for agent-specific Zero Trust policies. These include:

- Continuous authentication for agent activities
- Granular permission scopes
- Real-time logging of automated actions
- Behavioral anomaly detection
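Three of these principles can be sketched in a few lines under simplifying assumptions: a hypothetical `AgentCredential` carrying explicit scopes and a short expiry (a stand-in for continuous authentication), a deny-by-default `authorize` check, and an audit log entry for every decision. The scope names and credential shape are illustrative; production deployments would typically delegate this to an identity provider and a policy engine.

```python
import logging
import time
from dataclasses import dataclass
from typing import FrozenSet, Optional

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_log = logging.getLogger("agent-audit")


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    scopes: FrozenSet[str]  # hypothetical scope names such as "crm:read"
    expires_at: float       # epoch seconds; short lifetimes force frequent re-authentication


def authorize(cred: AgentCredential, action: str, now: Optional[float] = None) -> bool:
    """Deny by default: allow only an unexpired credential that holds the exact scope."""
    now = time.time() if now is None else now
    if now >= cred.expires_at:
        allowed, reason = False, "credential expired"
    elif action in cred.scopes:
        allowed, reason = True, "scope granted"
    else:
        allowed, reason = False, "scope not granted"
    # Log every decision, allowed or denied, so automated actions stay reviewable.
    audit_log.info("agent=%s action=%s allowed=%s reason=%s",
                   cred.agent_id, action, allowed, reason)
    return allowed


if __name__ == "__main__":
    cred = AgentCredential("support-bot", frozenset({"crm:read"}), time.time() + 900)
    authorize(cred, "crm:read")   # allowed
    authorize(cred, "crm:write")  # denied: scope not granted
```

Matching exact scope strings keeps the example simple; the design choice it illustrates is that anything not explicitly granted, including an expired credential, is refused and recorded.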

By embedding Zero Trust principles into AI deployment, companies can reduce blind spots. Nevertheless, implementation remains uneven across industries.

Why the Agentic Visibility Gap Matters

The Agentic Visibility Gap affects more than cybersecurity. It also impacts compliance, audit readiness, and reputational risk. Regulators increasingly scrutinize automated decision systems. If enterprises cannot document how agents operate, they may face legal exposure.

Additionally, insurers evaluate AI governance practices when underwriting cyber policies. A poorly managed agent ecosystem could increase premiums or limit coverage.

Operational resilience also depends on visibility. When agents malfunction, organizations must quickly identify root causes. Without centralized oversight, troubleshooting becomes difficult and costly.

Therefore, closing the Agentic Visibility Gap requires collaboration between IT, compliance, and executive leadership.

Governance Frameworks for Agent Deployment

To address emerging risks, enterprises are adopting structured AI governance programs. These frameworks focus on mapping agent inventory, monitoring permissions, and auditing activity logs.

Companies increasingly turn to platforms such as Adoptify ai to implement centralized oversight. Governance solutions help organizations document policies, enforce controls, and evaluate risk exposure continuously. By formalizing processes, enterprises can narrow the Agentic Visibility Gap before incidents occur.

Effective governance strategies typically include:

- Comprehensive agent inventory management
- Role-based access controls
- Continuous compliance monitoring
- Incident response planning tailored to AI systems
- Memory auditing to prevent manipulation
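Several of these practices meet in the agent inventory itself. The sketch below audits hypothetical inventory records against three assumed rules: every agent has an accountable owner, its scopes stay within a role-based catalog, and its memory has been audited recently. The `ROLE_SCOPES` catalog, the record fields, and the 30-day threshold are illustrative choices, not a published standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional, Set

# Hypothetical role catalog mapping each role to its maximum permitted scopes.
ROLE_SCOPES: Dict[str, Set[str]] = {
    "support": {"crm:read", "tickets:write"},
    "dev": {"repo:read", "repo:write"},
}


@dataclass
class AgentRecord:
    agent_id: str
    owner: Optional[str]
    role: str
    scopes: Set[str]
    last_memory_audit: Optional[date]


def audit_inventory(records: List[AgentRecord], today: date,
                    max_audit_age_days: int = 30) -> Dict[str, List[str]]:
    """Return {agent_id: [findings]} for records that break the governance rules."""
    findings: Dict[str, List[str]] = {}
    for rec in records:
        issues = []
        if not rec.owner:
            issues.append("no accountable owner")
        allowed = ROLE_SCOPES.get(rec.role)
        if allowed is None:
            issues.append(f"unknown role {rec.role!r}")
        elif not rec.scopes <= allowed:
            issues.append(f"scopes beyond role: {sorted(rec.scopes - allowed)}")
        if (rec.last_memory_audit is None
                or today - rec.last_memory_audit > timedelta(days=max_audit_age_days)):
            issues.append("memory audit overdue")
        if issues:
            findings[rec.agent_id] = issues
    return findings
```

Returning findings per agent, rather than a single pass/fail flag, makes the output usable directly as a remediation list for compliance reviews.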

Because Microsoft’s data points to rapid adoption, governance tools must evolve just as quickly.

Executive Awareness and Board-Level Oversight

The scale of deployment across the Fortune 500 elevates AI governance to the boardroom. Directors increasingly request transparency regarding AI risk management.

When executives cannot quantify how many agents operate across departments, the Agentic Visibility Gap becomes a strategic liability. Boards now seek regular reporting on AI inventory, data access patterns, and anomaly detection results.
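Anomaly detection results for board reporting can start from something as simple as comparing each agent's activity today with its own history. The sketch below flags agents whose daily action count sits more than a chosen number of sample standard deviations from their baseline, and lists agents with too little history for review. The input format and the threshold of 3.0 are assumptions; mature programs would use richer behavioral signals than raw counts.

```python
import statistics
from typing import Dict, List, Optional, Tuple


def flag_anomalies(history: Dict[str, List[int]], today_counts: Dict[str, int],
                   threshold: float = 3.0) -> List[Tuple[str, Optional[float]]]:
    """Return sorted (agent_id, z_score) pairs for agents that need attention.

    history: hypothetical per-agent daily action counts exported from activity logs.
    Agents with fewer than two days of history are reported with a z-score of None.
    """
    flagged: List[Tuple[str, Optional[float]]] = []
    for agent, count in today_counts.items():
        baseline = history.get(agent, [])
        if len(baseline) < 2:
            flagged.append((agent, None))  # no baseline yet: review manually
            continue
        mean = statistics.mean(baseline)
        spread = statistics.stdev(baseline)
        if spread == 0:
            z = 0.0 if count == mean else float("inf")
        else:
            z = (count - mean) / spread
        if abs(z) > threshold:
            flagged.append((agent, round(z, 2)))
    return sorted(flagged, key=lambda item: item[0])
```

Scoring each agent against its own baseline avoids penalizing agents that are simply busy by design, while still surfacing sudden shifts and newly appearing agents.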

Moreover, cross-functional coordination proves essential. IT teams must collaborate with legal departments to evaluate regulatory exposure. Security leaders must educate product teams about secure agent architecture.

As AI agents continue to reshape workflows, governance becomes an enterprise-wide responsibility.

Looking Ahead: Standardization and Regulation

Industry groups and policymakers are discussing standards for AI accountability. Although regulations remain in development, expectations around transparency continue to rise.

The Agentic Visibility Gap may drive calls for mandatory reporting frameworks. Regulators could require enterprises to document agent permissions and monitoring practices.

Meanwhile, technology vendors invest in enhanced audit features and monitoring dashboards. Microsoft and other providers increasingly emphasize responsible AI deployment guidelines.

However, tools alone cannot solve the challenge. Organizations must cultivate a culture that treats AI agents as managed digital actors rather than invisible utilities.

Conclusion: Adoption Outpaces Oversight

Microsoft’s finding that nearly 80% of the Fortune 500 deploy AI agents signals a dramatic transformation in enterprise operations. Yet this acceleration exposes a widening Agentic Visibility Gap.

Shadow AI, memory poisoning, and evolving Zero Trust requirements complicate governance efforts. Without proactive oversight, enterprises risk losing control over automated systems that influence core processes.

To remain secure and compliant, organizations must integrate structured governance into every phase of AI deployment. Visibility, accountability, and continuous monitoring now define responsible innovation.

For further insight into enterprise AI risk trends, read our previous coverage on the Agent Credential Breach impacting Moltbook and the broader implications for AI Security governance.